In Part 1 of the Diffusion Models series, I covered the theory behind DDPM, the most basic diffusion model, which consists of:

- a forward process that gradually corrupts an image with Gaussian noise,

- a reverse process where a neural network U-Net learns to denoise step by step.

If you got through all the math needed to understand DDPM, were not scared by it, and are in fact even more fascinated about diffusion models, the next (and hopefully easier to digest) paper is:

Improved Denoising Diffusion Probabilistic Models (Nichol & Dhariwal, 2021), as the name suggests - is an improved version of DDPM, follows that framework, but improves it in 3 main ways:

a cosine noise schedule that uses timesteps more effectively (for which I have actually covered both the theory and code in Part 1 - since I found it to be a marginal change that I could easily implement in my DDPM pipeline),

learned reverse-step variance that boosts the log-likelihood and sample quality,

a hybrid loss that combines both the MSE loss we saw in DDPM, and the negative variational lower bound (VLB) loss.

This blog post will be short since we have covered the bulk of the theory in part 1!

Table of Contents

- Motivations for the Improved DDPM paper

- The cosine schedule

- Learned variance in the reverse process

- Hybrid training objective

Section 3: Recap & What’s Next

Section 1: Theory

1. Motivations for the Improved DDPM paper

Improved DDPM was motivated by two limitations of the original DDPM paper, which the DDPM authors themselves acknowledged:

$^1$What are FID and NLL?

FID (Fréchet Inception Distance) and NLL (Negative Log-Likelihood) are distinct metrics:

- FID measures perceptual similarity to real data (lower is better) → FID aligns more with human visual judgment.

- NLL measures how well the model predicts the data distribution (lower is better) → NLL reflects probabilistic fit.

Shortcoming #1 (likelihood gap): Despite producing high-quality samples, DDPM failed to achieve competitive log likelihoods compared to other likelihood-based generative models (VAEs, autoregressive models, etc.). The authors largely evaluated DDPM with sample-quality metrics such as FID rather than NLL$^1$, so the likelihood question remains open.

Shortcoming #2 (slow sampling / inefficient steps): Generating a single sample from DDPM requires hundreds of sequential forward passes through the network - i.e. the image generation process is too slow for practical use, and many late timesteps seem to do very little useful work.

In response, Improved DDPM introduces two main techniques, each targeting one of these shortcomings:

1. Learned reverse-step variance + hybrid loss (for Shortcoming #1):

DDPM authors tried fixing the reverse variance to either $\sigma^2_t = \beta_t$ (the forward process variance) or $\sigma^2_t = \tilde{\beta}_t$ (the true posterior variance, a lower bound corresponding to $x_0$ being a delta function) for the reverse-step variance and found similar sample quality either way. So they concluded the choice didn’t matter much and simply kept the variance fixed.

Improved DDPM treats this as a missed opportunity for likelihood. It parameterizes the model variance $\Sigma_\theta(x_t, t)$ and trains it with a small VLB term$^2$ added to the usual noise-prediction loss, with extra weight on early timesteps where the VLB contributes most.

This directly improves the variational bound and therefore improves NLL, while keeping or slightly improving FID.

$^2$What is VLB?

variational lower bound (VLB) is a tractable lower bound on the log-likelihood $\log p_\theta(x_0)$, which is what we ultimately want to maximize but can’t compute directly. It decomposes into a sum of per-step KL divergence terms - one for each reverse step - measuring how well the estimated $p_\theta(x_{t-1}|x_t)$ matches the true posterior $q(x_{t-1}|x_t, x_0)$. Maximizing the VLB is equivalent to minimizing those KL terms, pushing the learned reverse process as close as possible to the true (but intractable) reverse distribution.

2. Cosine schedule (for Shortcoming #2, partially).

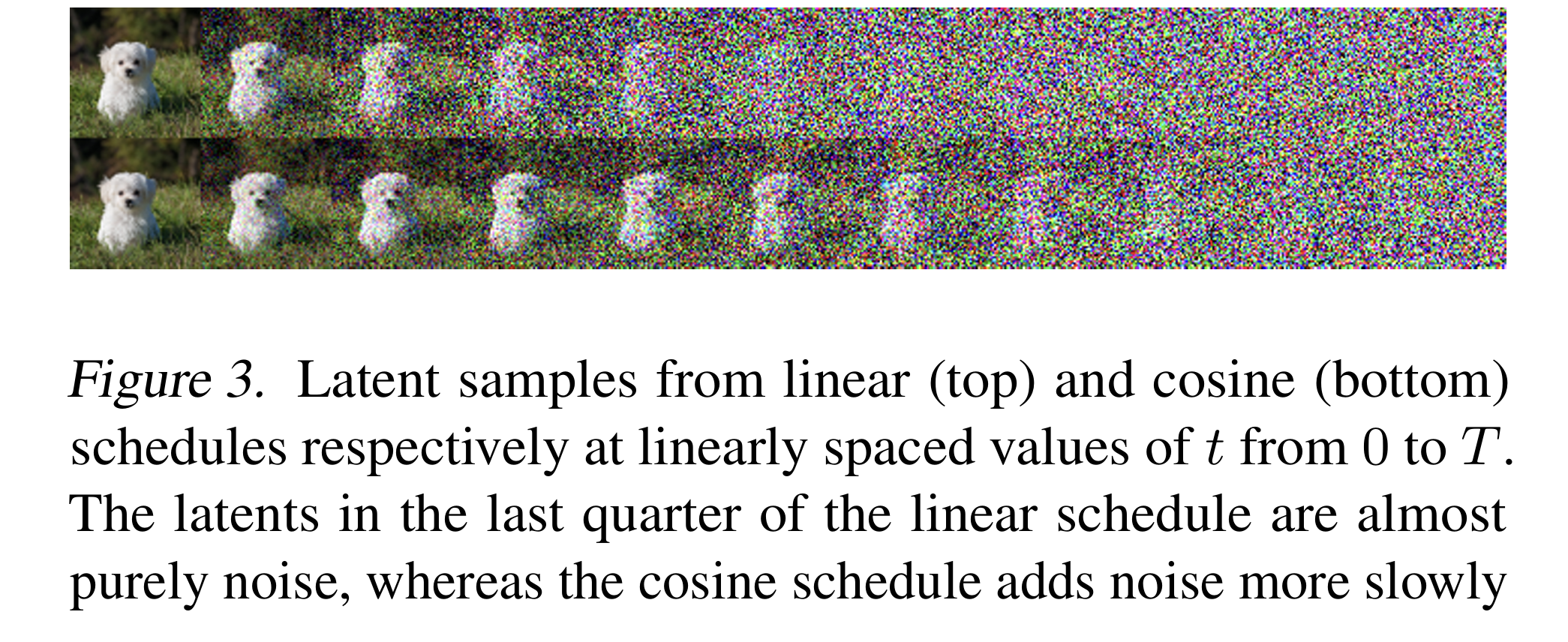

With the linear schedule, the image becomes almost pure noise well before $t = T$, so many late timesteps contribute little information.

Improved DDPM proposes a cosine schedule for $\bar{\alpha}_t$ that decays more smoothly, so each timestep sees a more useful noise level. They show that with this schedule, skipping around 20% of reverse steps barely changes FID, meaning compute is used more efficiently across timesteps.

This does not fully solve the slow-sampling problem (we still use a long chain), but it makes the existing steps more informative and improves sample quality at the same number of steps.

Together, these changes yield:

- better NLL (mainly from learned variance + hybrid loss),

- better FID/sample quality at the same number of diffusion steps (from both the cosine schedule and better-trained variance).

2. The cosine schedule

You can find detailed explanations for the cosine schedule in Part 1 (DDPM).

TL;DR: The cosine schedule adds noise more slowly and evenly. As a consequence, the “amount of denoising” is spread more evenly across timesteps. The model sees a wider range of noise levels during training instead of many steps that are already pure noise. Training stability and sample quality » those of a linear schedule.

3. Learned variance in the reverse process

In DDPM, the reverse step is a Gaussian whose mean $\mu_\theta(x_t, t)$ is given by the network (via the predicted noise $\varepsilon_\theta$), and whose variance is fixed to the true posterior variance $\tilde{\beta}_t$:

$$p_\theta(x_{t-1}|x_t) = \mathcal{N}(x_{t-1}; \mu_\theta(x_t,t), \tilde{\beta}_t I)$$

Recall from Part 1 that the true posterior $q(x_{t-1}|x_t, x_0)$ is tractable when conditioned on $x_0$, and is a Gaussian with:

$$\tilde{\mu}_t(x_t, x_0) = \frac{\sqrt{\bar{\alpha}_{t-1}} \cdot \beta_t}{1 - \bar{\alpha}_t} x_0 + \frac{\sqrt{\alpha_t} \cdot (1 - \bar{\alpha}_{t-1})}{1 - \bar{\alpha}_t} x_t$$

$$\tilde{\beta}_t = \frac{1 - \bar{\alpha}_{t-1}}{1 - \bar{\alpha}_t} \beta_t$$

DDPM fixes the reverse-step variance $\tilde{\beta}_t$ and does not learn it.

Improved DDPM instead parameterizes the variance and learns it. Specifically:

$^3$

This is a geometric (log-linear) interpoloation, not an arithmetic one.

The vector $v$ is an output of the network and thus depends on both $x_t$ and $t$.

- It parameterizes $\Sigma_\theta(x_t, t)$ as a log-domain interpolation between $\beta_t$ and $\tilde{\beta}_t$ - i.e., the upper and lower bounds on the reverse-step variance. The network outputs a vector $v$ (one component per dimension), and the variance$^3$ is:

$$\boxed{\Sigma_\theta(x_t, t) = \exp\bigl(v \log \beta_t + (1 - v) \log \tilde{\beta}_t\bigr)}$$

At first, I took this formulation for granted and just agreed with what the authors proposed here. But after learning more about it, I realized how and why they were able to come up with this:

$\Sigma_\theta(x_t, t)$ need to stay within the range $[\beta_t$, $\tilde{\beta}_t]$, which is known to be the reasonable interval for the reverse-step variance. However, this range is very small, making direct prediction unstable.

Hence, the authors formulated it such that the network needs to predict/learn an unconstrained interpolation weight $v$, and map it to that range via a geometric interpolation in the log-space, which naturally keeps the output between the two bounds regardless of what $v$ the network outputs.

Now that we understand how $\Sigma_\theta(x_t, t)$ is formulated, the next question is, how do we train/learn it?

We need a loss that is sensitive to the specific choice of variance. $L_{simple}$ (the noise-prediction loss) is not, since it only depends on the predicted mean.

Hence, the VLB is the right signal because it directly measures, at each timestep $t$, how close the model’s reverse step $p_\theta(x_{t-1}|x_t)$ is to the true posterior $q(x_{t-1}|x_t, x_0)$. And this closeness depends on both the mean and variance.

Since both distributions are Gaussian, the KL divergence has a closed form$^4$:

$$ \mathrm{KL}\bigl( q(x_{t-1}\mid x_t,x_0) ,\big|, p_\theta(x_{t-1}\mid x_t) \bigr) = \tfrac{1}{2} \left[ \log \tfrac{\Sigma_\theta(x_t,t)}{\tilde{\beta}_t} + \tfrac{\tilde{\beta}_t + |\tilde{\mu}_t(x_t,x_0) - \mu_\theta(x_t,t)|^2}{\Sigma_\theta(x_t,t)} - 1 \right] $$

The better $\Sigma_\theta$ matches the true posterior’s variance (or spread) at each step, the smaller this KL term and the tighter the VLB.

We’ve talked about the variance, what about the mean? In principle, the same VLB term could also be used to update $\mu_\theta$, but the paper deliberately keeps the two separated:

a stop-gradient is applied to $\mu_\theta$ inside the VLB term, so gradients from the VLB only flow into $\Sigma_\theta$, which $\mu_\theta$ is trained solely by $L_{simple}$

this separation is important for stability - more on it in Section 4.

TL;DR: Why do we need to learn the variance instead of keeping it fixed?

A fixed $\tilde{\beta}_t$ is a one-size-fits-all choice: the same variance is used at every step regardless of what the model actually needs.

Learned variance lets the model adapt. It can use a smaller variance when it’s confident about the denoising direction, and a larger one when uncertain.

In general, this tighter fit to the data improves the log-likelihood and in practice often improves sample quality (measured by FID) as well.

4. Hybrid training objective

Recall in this section from Part 1 how DDPM training uses a simple loss:

$$\mathcal{L}_{\text{simple}} = \mathbb{E}[| \varepsilon - \varepsilon_\theta(x_t, t) |^2]$$

which corresponds to predicting the noise, and this loss actually gives good samples even though it’s not the full VLB.

Improved DDPM keeps the simple loss $L_{simple}$ for the mean $\mu_\theta$ (noise prediction), and adds the VLB so that the variance $\Sigma_\theta$ is also trained with the proper variational objective.

The total loss is a hybrid loss:

$$\boxed{\mathcal{L} = \mathcal{L}_{\text{simple}} + \lambda \mathcal{L}_{\text{vlb}}}$$

Here:

$\mathcal{L}_{\text{vlb}}$ is the negative variational lower bound, so we minimize $\mathcal{L}_{\text{vlb}}$.

The scalar $\lambda$ is small (e.g., 0.001) so that the main signal still comes from $\mathcal{L}_{simple}$ and training remains stable.

The paper defines $\mathcal{L}_{\text{vlb}}$ explicitly as a sum of per-step terms:

$$\mathcal{L}_{\text{vlb}} = L_0 + L_1 + \cdots + L_{T-1} + L_T$$

where:

$$L_0 = -\log p_\theta(x_0 | x_1)$$

$$L_{t-1} = D_{\text{KL}}\bigl[ q(x_{t-1}|x_t, x_0) | p_\theta(x_{t-1}|x_t)\bigr] \quad \text{for } t = 1, \ldots, T$$

$$L_T = D_{\text{KL}}\bigl[q(x_T|x_0) | p(x_T)\bigr]$$

- $L_0$ is the reconstruction term - how well the model recovers the clean image $x_0$ from $x_1$ (the slightly noised version). It is the only term that directly measures image reconstruction quality.

- $L_{t-1}$ is the denoising term at each step - the KL divergence between the true posterior $q(x_{t-1}|x_t, x_0)$ and the model’s reverse step $p_\theta(x_{t-1}|x_t)$. Since both are Gaussians, this has a closed form (see above). This is the term that provides the training signal for $\Sigma_\theta$.

- $L_T$ is the prior matching term - how close the fully noised $x_T$ is to a standard Gaussian $\mathcal{N}(0, I)$. This does not depend on $\theta$ at all (the forward process is fixed), so it is a constant during training and can be ignored for optimization.

During backprop, gradients from $\mathcal{L}_{\text{vlb}}$ would normally flow through both $\Sigma_\theta$ and $\mu_\theta$, essentially updating both. But the paper applies a stop-gradient to $\mu_\theta$ inside $\mathcal{L}_{\text{vlb}}$, which means: when computing the VLB loss, the network’s mean output $\mu_\theta$ is treated as a fixed constant. Gradients are blocked from flowing through it. So $\mathcal{L}_{\text{vlb}}$ can only update $\Sigma_\theta$.

The reason behind this design choice is actually so interesting:

Because $\mathcal{L}_{\text{vlb}}$ and $\mathcal{L}_{\text{simple}}$ would otherwise send competing gradient signals to $\mu_\theta$ - the two losses are not perfectly aligned, and letting both update the mean simultaneously destabilizes training.

The clean separation, then, looks like this: $\mathcal{L}_{\text{simple}}$ is solely responsible for teaching the network to predict noise (training $\mu_\theta$), and $\mathcal{L}_{\text{vlb}}$ is solely responsible for teaching the network what variance to use at each step (training $\Sigma_\theta$).

Section 2: Code

1. Improved DDPM official PyTorch implementation

The codebase for Improved DDPM is made public by the authors at: https://github.com/openai/improved-diffusion

It’s very simple to navigate, so I highly recommend you check it out.

2. My PyTorch implementation (coming soon)

If you’ve had the chance to look through my PyTorch implementation for DDPM here, then these files will remain unchanged: dataset.py, noise_scheduler.py, unet.py, utils.py.

The only files that need changing to implement new ideas proposed in Improved DDPM are: diffusion.py, ddpm.py, sample.py and train.py - and I’ve included either a suffix _improved or prefix improved_ to these files to make it clear.

Section 3: Recap & What’s Next

I will update this section when I’m done with my PyTorch implementation for Improved DDPM and have some generated samples from that model to showcase here.

After that, I’m looking forward to diving into the paper Denoising Diffusion Implicit Models (DDIM) (Song et al., 2021), which dramatically speeds up sampling from 1000 steps to as few as 50. So far, my experience with generating images from diffusion models has been painfully slow.

Useful Resources

Theory

Code

Citation

Le, Nhi. "Diffusion Models - Part 2: Improved DDPM". halannhile.github.io (March 2026). https://halannhile.github.io/posts/improved-ddpm/

BibTeX:

@article{nhi2025ddpm,

title = {Diffusion Models - Part 2: Improved DDPM},

author = {Nhi},

journal = {halannhile.github.io},

year = {2026},

month = {March},

url = "https://halannhile.github.io/posts/improved-ddpm/"

}